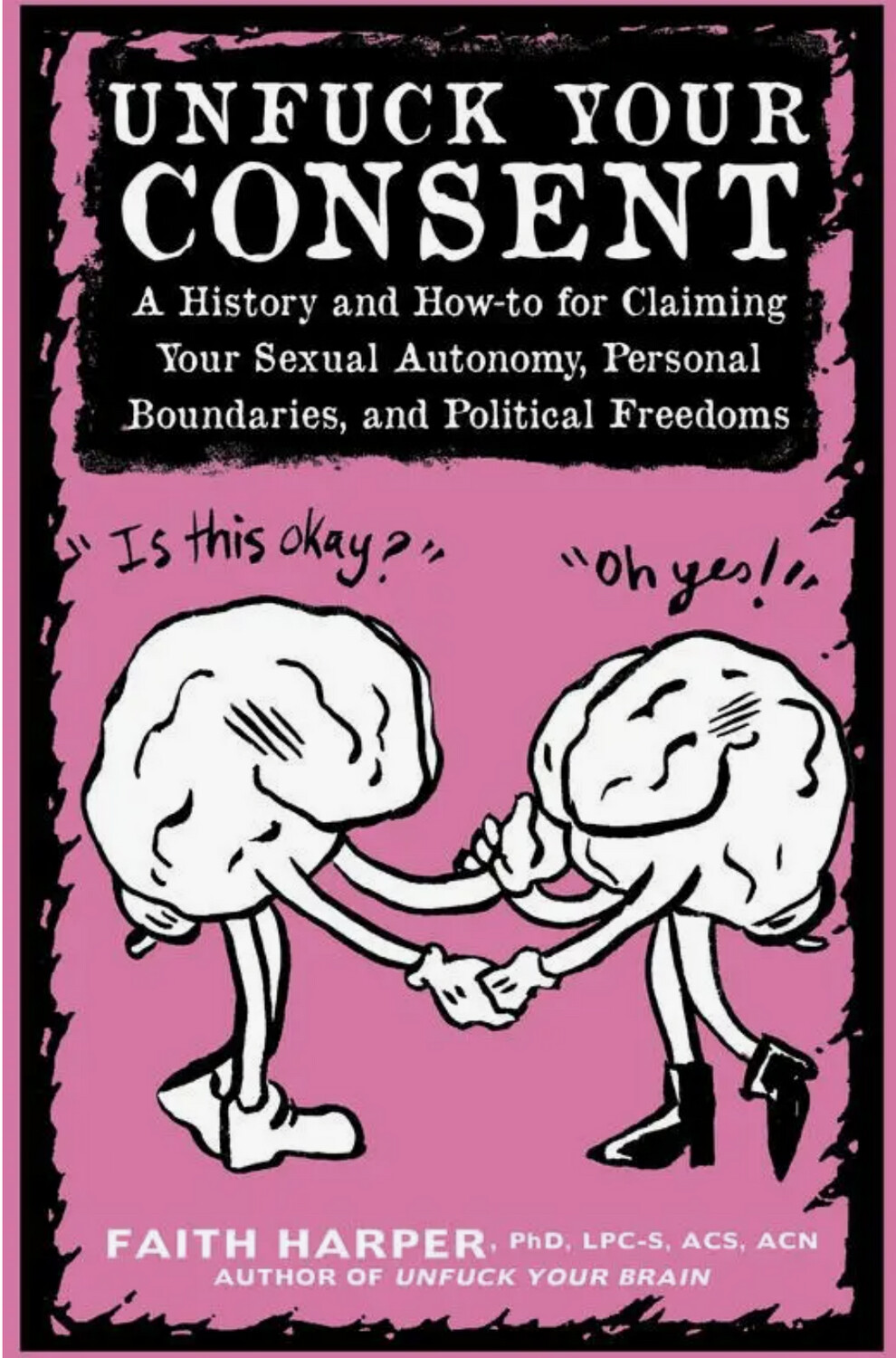

Unfuck Your Consent - Zine by Dr. Faith G. Harper

9781621065012

$4.95

Out of stock

Product Details

What does consent mean? Where does this idea come from, and why is it being talked about in a different way now than it was 20 years ago? More importantly, what does it have to do with any of us? How do we make sure we have the informed consent of everyone we interact with for the stuff we do that affects them? How do we make sure other people know what is and isn't okay with us? How do we navigate life in the post-#MeToo era with dignity, respect, and confidence?
